On the first day of the Symposium on Geometry Processing (SGP), I was excited to learn two things: how welcoming the geometry processing community is and different techniques for mesh stylization.

First, I was relieved, when joining the ice-breaker events on the first day of SGP, to learn how welcoming the community is to attendees at all levels. At first, I was scared to introduce myself as an undergraduate student who is new to this discipline and is about to join SGI as a summer fellow. However, the graduate students, postdocs, and professors were really welcoming, and I was excited to see some of the mentors in the breakout rooms. Through conversations with Ph.D. students, professors, and engineers in non-academic disciplines, I realized how people from different backgrounds can all contribute to this field. This eased my nervousness from the past two days and motivated me to explore more about geometry processing through SGP!

Second, I would like to highlight two talks on mesh stylization given by Maximilian Kohlbrenner and Hsueh-Ti Derek Liu, titled “Gauss Stylization: Interactive Artistic Mesh Modeling based on Preferred Surface Normals” and “Normal-Driven Spherical Shape Analogies”, respectively. To begin with, a style is a “distinctive manner which permits the grouping of works into related categories” (Fernie 1995, referenced in Liu’s talk). In geometry processing, stylization tools take a piece of geometry and reshape it to have a distinctive appearance. Some elements of style are straightforward, such as shapes, proportions, and lines. One previous stylization method is cubic stylization, whose objective function sums an as-rigid-as-possible (ARAP) energy term and a “cubeness” term weighted by a scalar \(\lambda\).
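For readers who want to see the objective written out, here is a sketch of the cubic stylization energy as I understand it from the cubic stylization paper by Liu and Jacobson. The symbols are not from the talks and are assumptions following common ARAP notation: \(R_i\) is a rotation per vertex, \(d_{ij}\) and \(\tilde{d}_{ij}\) are the original and deformed edge vectors around vertex \(i\), \(w_{ij}\) are cotangent weights, \(a_i\) is a vertex area, and \(\hat{n}_i\) is the unit normal at vertex \(i\).

\[
\min_{\tilde{V},\,\{R_i\}} \; \sum_{i \in V} \left( \sum_{j \in \mathcal{N}(i)} \frac{w_{ij}}{2} \left\| R_i d_{ij} - \tilde{d}_{ij} \right\|_2^2 \;+\; \lambda\, a_i \left\| R_i \hat{n}_i \right\|_1 \right)
\]

The first term is the ARAP energy. The second is the “cubeness” term: the \(\ell_1\) norm of a unit vector is smallest when the vector is aligned with a coordinate axis, so increasing \(\lambda\) pushes the rotated normals toward the six axis directions.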

In his talk, Kohlbrenner discussed Gauss stylization, which subtracts the cubeness term from the ARAP energy instead of adding the two together. The authors then reformulate the energy by decoupling the normals so that there are three sets of variables, which leads to an ARAP-like optimization method. It was difficult for me to follow the details, but this is the big picture I took away from the talk. It is interesting to me not only because all of this is new to me, but also because I got to see the new shapes they create from Gauss images. It would not have occurred to me that editing surface normals can change the look or use of a piece of geometry so much.

Liu introduced another normal-driven algorithm to stylize different shapes. His three-step algorithm works as follows. First, he matches the sphere to a style template. Second, he matches the sphere to an input shape. Last, he deforms the input shape by optimizing a normal-based energy. His work uses two constructions related to shape matching and editing: the Gauss map and curvature flow. The Gauss map sends every point on a surface to its unit normal, viewed as a point on the unit sphere, and editing it expresses a transformation of the surface. After describing the basic steps of his method, Liu discussed the difficulty of the optimization process and his approach. The difficulty lies in the fact that the equations are nonlinear. However, after a change of variables, some of the variables can be computed using a single SVD while the others can be computed by solving a linear problem. Just like Kohlbrenner’s method, Liu’s algorithm alternates between these two steps to optimize. This research is interesting to me because the algorithm not only achieves its initial goal but can also be used in practice. At the end, he discussed many extensions and applications that are open to explore. These include using other energy terms instead of the ARAP one, polycubes, and geometric textures.
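To make the Gauss map concrete, here is a minimal sketch of its discrete version on a triangle mesh: each face is sent to its unit normal. This is not code from either talk; the function names and the hard-coded cube are my own illustration. Both talks work with normals in this spirit, though their actual energies are more involved.

```python
import math

def face_normal(a, b, c):
    """Unit normal of triangle (a, b, c), following the right-hand rule."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = math.sqrt(sum(x * x for x in n))
    if length == 0.0:
        raise ValueError("degenerate triangle has no normal")
    return [x / length for x in n]

def gauss_map(vertices, faces):
    """Discrete Gauss map: one point on the unit sphere per face."""
    return [face_normal(*(vertices[i] for i in face)) for face in faces]

# Unit cube, triangulated with outward-facing orientation.
CUBE_VERTICES = [
    (0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
    (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1),
]
CUBE_FACES = [
    (0, 3, 2), (0, 2, 1),  # bottom
    (4, 5, 6), (4, 6, 7),  # top
    (0, 1, 5), (0, 5, 4),  # front
    (3, 7, 6), (3, 6, 2),  # back
    (0, 4, 7), (0, 7, 3),  # left
    (1, 2, 6), (1, 6, 5),  # right
]

normals = gauss_map(CUBE_VERTICES, CUBE_FACES)
# The Gauss image of a cube collapses onto the six axis directions.
distinct_normals = {tuple(round(x, 9) for x in n) for n in normals}
```

The cube is a useful sanity check: its Gauss image consists of only six points on the sphere, which is exactly the kind of normal distribution a cubic style tries to reach.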

I really enjoyed these two lectures, which introduced Gauss maps and other related shape synthesis ideas. The contents motivate me to explore more about mesh stylization and I am excited to learn more about them in the future.

Is It A Donut or Is It A Mug?

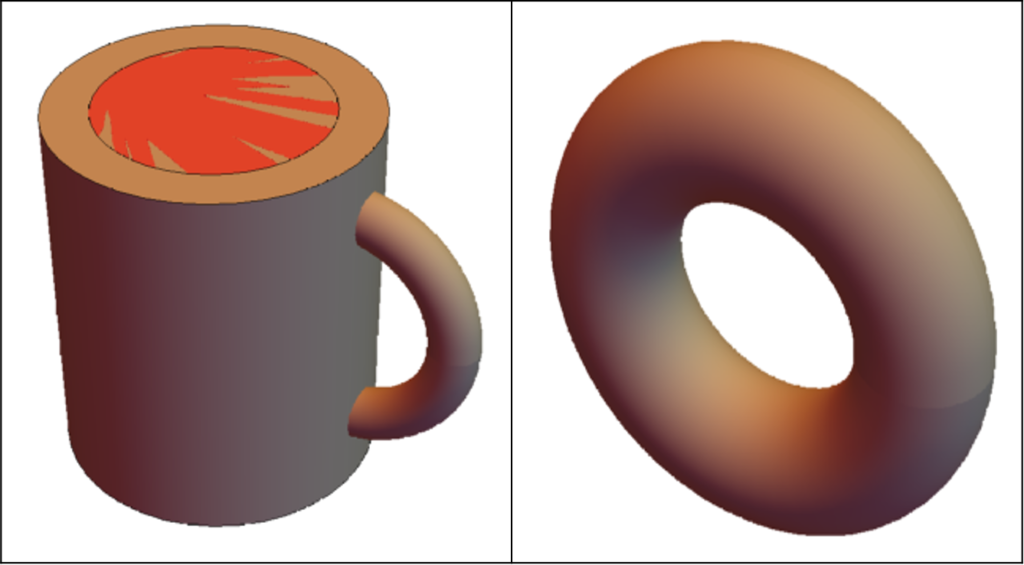

There is a famous saying: a topologist is a person who can’t differentiate between a donut and a mug. At first glance, this might sound very confusing. Donuts and mugs are two very different objects, so how can someone not be able to tell them apart? Well, to get this joke, we first need to understand homeomorphisms. In this post, we will try to dig deeper into this concept, and then we will talk briefly about surface-to-surface mapping.

Before diving into the theoretical explanation, let us illustrate the matter with some visualizations. Below is a picture of a basic 3D mug and a donut drawn in Wolfram Mathematica. (Disclaimer: The objects may not look very attractive, but let’s keep them this way for simplicity.)

Now, if we look closely, we can change (or deform) the mug into a donut shape. The related code snippet and the deformation are shown below:

We can also go back to our original mug shape from the donut shape. So, we can say that this change is invertible and bijective:

So far, we have seen that we can deform a mug into a donut and vice versa. In other words, there is a map between these two shapes. In mathematical terms, such a map is known as a homeomorphism. To be more precise, two shapes are said to be homeomorphic when there is a bijective map between them that is continuous and whose inverse is also continuous.
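As a much simpler stand-in for the mug and the donut, here is a minimal sketch of a homeomorphism between the unit circle and the boundary of a square, written in Python rather than Mathematica. It is not the deformation used for the figures in this post; the function names are hypothetical. The map pushes each point of the circle radially onto the square, and the inverse pushes it back.

```python
import math

def circle_to_square(p):
    """Radially project a point of the unit circle onto the boundary
    of the square [-1, 1] x [-1, 1]."""
    x, y = p
    s = max(abs(x), abs(y))  # never zero for a point on the circle
    return (x / s, y / s)

def square_to_circle(q):
    """Inverse map: radially project a point of the square boundary
    back onto the unit circle."""
    x, y = q
    r = math.hypot(x, y)  # never zero for a point on the square boundary
    return (x / r, y / r)

# Round trip for a sample of points on the circle.
samples = [(math.cos(2 * math.pi * k / 360), math.sin(2 * math.pi * k / 360))
           for k in range(360)]
round_trip = [square_to_circle(circle_to_square(p)) for p in samples]
```

Both functions are continuous away from the origin, and each undoes the other, so together they satisfy the definition above. A circle and a square are therefore "the same" to a topologist, just like the mug and the donut.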

Now that we have a basic understanding of homeomorphism, let’s dive deeper into this matter. In the donut and mug example above, we manually designed a deformation function that mapped between these objects. This map will not always work for every other shape we encounter. Moreover, it will be very tedious to manually find every map between all possible pairs of shapes. So, we need to find a generic solution that will work for a larger class of shapes. In geometric terms, this task is commonly referred to as “Surface-to-Surface Mapping”. This task is especially useful for a wide range of geometric applications, such as shape correspondence, texture transfer, layout transfer, and abstract layout embedding.

In the SGP 2021 Graduate School, there was a session titled “Maps Between Surfaces” by M. Campen and P. Schmidt, where the authors described different methods of surface-to-surface mapping in detail. I was deeply intrigued by their talk, and much of the rest of this post is inspired by it.

To understand the concept of surface-to-surface mapping, we need to understand plane-to-plane mapping and surface-to-plane mapping first. In simple terms, plane-to-plane mapping is a homeomorphic map that takes a 2D plane to a different 2D plane. Similarly, surface-to-plane mapping is a homeomorphic map that takes a surface (represented as a triangle mesh) to a 2D plane. However, this might not always be possible for non-disk surfaces. For example, no matter how hard we try, we can’t find a homeomorphic map between a sphere and a plane. In such cases, we use a cut graph to cut the surface open so that it can be flattened.

We are now ready to look at different representation techniques of surface-to-surface mapping:

- Reduction to surface-to-plane mapping (for smooth surfaces): We can reduce the problem to a simpler surface-to-plane mapping problem. That is, surface A would be mapped to a plane C, and C would be mapped to the other surface, B.

- Vertex to ambient map: In this map, we store the corresponding 3D coordinate of each vertex of surface A.

- Vertex to surface map: Instead of directly storing the 3D coordinate, we store the corresponding triangle ID and relative position on surface B for each vertex of A.

- Vertex to vertex map: For every vertex of surface A, we store a vertex of surface B.

- Functional map: In this setting, we represent the mapping using low-frequency functions (for example, from a Laplacian eigenbasis of the mesh).
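To illustrate one of the representations above, here is a minimal sketch of a vertex-to-surface map. I am assuming that the "relative position" inside a triangle is stored as barycentric coordinates, which is a common choice but not something stated in the talk. The names `SurfacePoint` and `evaluate` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]

@dataclass
class SurfacePoint:
    """A point on surface B: a triangle ID plus barycentric coordinates."""
    triangle_id: int
    bary: Tuple[float, float, float]  # non-negative weights that sum to 1

def evaluate(sp: SurfacePoint,
             vertices_b: Sequence[Point],
             faces_b: Sequence[Tuple[int, int, int]]) -> Point:
    """Recover the 3D position on B described by a SurfacePoint."""
    i, j, k = faces_b[sp.triangle_id]
    w0, w1, w2 = sp.bary
    a, b, c = vertices_b[i], vertices_b[j], vertices_b[k]
    return tuple(w0 * a[d] + w1 * b[d] + w2 * c[d] for d in range(3))

# Surface B: a single triangle. Surface A: two vertices, each mapped onto B.
vertices_b: List[Point] = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
faces_b = [(0, 1, 2)]
vertex_to_surface = [
    SurfacePoint(0, (1.0, 0.0, 0.0)),  # vertex 0 of A lands on a vertex of B
    SurfacePoint(0, (0.5, 0.5, 0.0)),  # vertex 1 of A lands on an edge midpoint
]
images = [evaluate(sp, vertices_b, faces_b) for sp in vertex_to_surface]
```

Compared with a vertex-to-ambient map, this representation guarantees that every image point lies exactly on B. Compared with a vertex-to-vertex map, it can land anywhere on B, not only on B's vertices.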

However, each of these representations has some disadvantages. The common problem is that all of these maps only store information for the vertices of A, but not for other points on A (such as points that lie on edges or inside triangles). Hence, it might be difficult to find the inverse map from surface B to surface A. In other words, these maps are not guaranteed to be bijective. To define proper homeomorphisms, we can take inspiration from the Gauss-Bonnet theorem, which ties the total curvature of a closed surface to its genus. Following this idea, we can map shapes of genus 0, genus 1, and genus greater than 1 to the sphere, the plane, and the hyperbolic plane, respectively, so that bijectivity is ensured. The hyperbolic plane itself can be represented with the Poincaré model, the Beltrami-Klein model, or the hyperboloid model.
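The link between genus and the choice of target domain can be made concrete with the Euler characteristic. For a closed, connected, orientable triangle mesh, \(\chi = V - E + F = 2 - 2g\), and by Gauss-Bonnet the total curvature is \(2\pi\chi\), so its sign tells us which constant-curvature domain fits. The sketch below is my own illustration with hypothetical function names, and it assumes the mesh really is closed, connected, and orientable.

```python
def genus_from_counts(num_vertices, num_edges, num_faces):
    """Genus of a closed, connected, orientable mesh from its element counts,
    using chi = V - E + F = 2 - 2g."""
    chi = num_vertices - num_edges + num_faces
    if chi > 2 or chi % 2 != 0:
        raise ValueError("counts do not describe a closed orientable surface")
    return (2 - chi) // 2

def canonical_domain(genus):
    """Constant-curvature domain matching the sign of the total curvature."""
    if genus == 0:
        return "sphere"            # positive total curvature
    if genus == 1:
        return "plane"             # zero total curvature
    return "hyperbolic plane"      # negative total curvature

# A tetrahedron (sphere-like) and a 3x3 triangulated torus.
tetrahedron_genus = genus_from_counts(4, 6, 4)
torus_genus = genus_from_counts(9, 27, 18)
```

This means the donut and the mug from the beginning of the post, both of genus 1, end up in the same class, while a sphere does not.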

Apart from being a homeomorphism, a surface-to-surface map must also satisfy some other constraints: it has to keep distortion low and respect semantic and topological constraints. These constraints can be satisfied by following a two-step approach, consisting of map initialization and map optimization. The authors also described in detail how this approach works. As this is beyond the scope of this post, we will not look deeper into those techniques. Interested readers are encouraged to watch their full session here: https://youtu.be/jMWJ79EpyfQ.

Reporting from the SGP Graduate School

SGI Fellows were registered for and invited to attend the Symposium on Geometry Processing (SGP), the premier venue for disseminating new research ideas and cutting-edge results in geometry processing.

I am writing this from the comfort of the EST time zone and from a boring but quiet dorm room. The Symposium on Geometry Processing (SGP) has successfully reminded me of the existence of a world outside my current location: a remarkable feat, especially given the forced geographic sedentarism of the past year and a half. Plenty of SGP attendees were active, participating, asking questions, and being engaged, despite it being late at night or very early in their time zones. Many other people will watch the SGP recordings on YouTube in the upcoming days. The concluding remark of “have a great day, or afternoon, or evening, depending on where you are right now” gave insight into precisely how geographically widespread the geometry processing community is.

Before delving further, some context is warranted: SGP is a yearly conference where people disseminate new results and ideas placed at the enticing intersection of theory and applications of mathematics, computer science, engineering, and other subjects. This year’s event is divided into the Graduate School (July 10-11) and the Conference (July 12-14). As I am writing this, it is July 11, so I have only attended the Graduate School events so far.

Out of the talks I have attended, I want to focus on the Introduction to Geometry Processing Programming in MATLAB with gptoolbox. It is a fantastic tutorial prepared and presented by Hsueh-Ti Derek Liu, Silvia Sellán, and Oded Stein, and advised by Alec Jacobson. The tutorial is comprehensive and explains how things fit together in a bigger context. Furthermore, it offers hands-on MATLAB exercises, which come with solutions in case you get stuck.

For context, gptoolbox is a set of MATLAB functions for geometry processing, aimed at making things easier and preventing researchers from reinventing the wheel. I will tell you three new things I took away from the tutorial. This is, of course, not to say that there were only three things one could take away! In fact, I encourage you to watch the video and try to find more.

First, it was great to see how “from-the-ground-up” the teaching approach was. I prefer to prevent, rather than fix, technical crises, and the introductory bits of MATLAB knowledge (such as: if you want to suppress the output of a statement, terminate the statement with a semicolon) were very welcome. I have spent more evenings than I would like to admit trying to run non-working code, only to realize, by trial and error, that my mistake was fixable in 3 seconds. As such, explicitly stating things, with no assumption of prior knowledge, was great.

My favorite part from Oded’s section was learning how to give objects shadows, as well as playing with the way light is reflected from the surface of the object. The tutorial covered techniques based on the Phong reflection model, so I look forward to learning additional approaches and more nuanced techniques.
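For readers who have not met the Phong reflection model before, here is a minimal sketch of it in Python. This is not gptoolbox code and not what the tutorial showed on screen; the function name, parameter names, and default coefficients are all my own assumptions. The intensity at a surface point is the sum of an ambient term, a diffuse term that depends on the angle between the normal and the light, and a specular term that depends on how closely the mirrored light direction lines up with the viewer.

```python
import math

def _normalize(v):
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def phong_intensity(normal, to_light, to_viewer,
                    ambient=0.1, diffuse=0.7, specular=0.2, shininess=32.0):
    """Scalar Phong intensity for a single white light of unit strength.

    normal, to_light, and to_viewer are 3D vectors pointing away from the
    surface point; they do not need to be normalized.
    """
    n = _normalize(normal)
    l = _normalize(to_light)
    v = _normalize(to_viewer)
    n_dot_l = _dot(n, l)
    if n_dot_l <= 0.0:
        # The light is behind the surface: only the ambient term remains.
        return ambient
    # Mirror the light direction about the normal.
    r = [2.0 * n_dot_l * n[i] - l[i] for i in range(3)]
    highlight = max(_dot(r, v), 0.0) ** shininess
    return ambient + diffuse * n_dot_l + specular * highlight

head_on = phong_intensity([0, 0, 1], [0, 0, 1], [0, 0, 1])
oblique = phong_intensity([0, 0, 1], [1, 0, 1], [0, 0, 1])
in_shadow = phong_intensity([0, 0, 1], [0, 0, -1], [0, 0, 1])
```

Raising `shininess` makes the highlight smaller and sharper, which is one way to play with how light is reflected from the surface.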

Second, I was especially intrigued by the part on spectral conformal mapping in Derek’s section. Not only do we get to see the theoretical description of a geometric process, the knowledge of how important the subroutine is, and the paper it was first described in, but we also get a visual representation of how the algorithm works. We get a “before” and “after” picture of a shape that is familiar to us, alongside theoretical descriptions of mathematical objects. It turns out, you CAN have the best of both (mathematical) worlds!

Third, I was especially fascinated by the ability to triangulate a 2D shape in just two lines of code with the help of the get_pencil_curve function. The idea is that you can “call” the function and draw any shape you would like, using your mouse as the pencil. Silvia first illustrated the need for such a tool before introducing it. Then, after triangulating the figure, she outlined, for comparison, the work one would need to do to get the same result without gptoolbox. The alternative included at least two other programs and the word “export” multiple times, which not only sounds like a lot of work, but also like a logistical nightmare.

As a newcomer to geometry processing, it was good to see the dedication to knowledge exchange and the great efforts made to be inclusive towards the largest possible number of people. I spent part of this summer watching geometry processing courses to bring myself up to speed with the discipline, and my brain was ecstatic when it recognized concepts I had studied earlier, or when it was able to make connections on its own. The existence of the Graduate School made me even more excited for what is in store for the rest of the conference and for the rest of the summer. So, I am keeping my brain open for all the knowledge acquisition that will undoubtedly occur during SGP and, afterwards, during the Summer Geometry Institute (SGI).

Lastly – the Graduate School at SGP gave me an idea of the level of growth I will experience in the coming weeks, first at the SGP talks, and – next – at SGI. Not only will I learn a lot, but it will also be fun and incredibly rewarding! I think it will be especially interesting to put this post side by side with one I will write closer to the end of the SGI program, and to compare the difference marked by a few weeks of intensive learning.

Welcome to SGI 2021

Welcome to the official blog of the Summer Geometry Institute 2021, to be held July 19 to August 28, 2021. 🎉🎉🎉 I am writing this initial post to introduce our program and to share a few of our plans for this summer.

First, a quick introduction. I’m Justin Solomon, an associate professor of Electrical Engineering and Computer Science (EECS) at MIT and organizer of SGI 2021. I lead the MIT Geometric Data Processing group, which studies problems at the intersection of geometry, large-scale optimization, and applications in areas like graphics and machine learning.

SGI is the result of discussions among a worldwide network of geometry processing researchers. These discussions started during the 2020 Symposium on Geometry Processing (SGP), which, like many conferences in 2020, was held online for the first time. While we were sad not to see each other in person at a conference center in Utrecht, the online format actually allowed SGP to reach a broader and more geographically diverse audience than ever before. This helped us realize that we should be creating similar opportunities for students and early-stage researchers to enter geometry processing research, even if they do not have opportunities to try this discipline at their home institutions. This led us to design SGI, a summer research program that introduces a broad pool of students to geometry processing research through immersive interaction with top researchers in the discipline.

SGI aims to accomplish the following objectives:

- spark collaboration among students and researchers in geometry processing,

- launch inter-university research projects in geometry processing involving team members across broad levels of seniority (undergraduate, graduate, faculty, industrial researcher),

- introduce students to geometry processing research and development, and

- diversify the “pipeline” of students entering geometry processing research, in terms of gender, race, socioeconomic background, and home institution.

In addition to its research goals, SGI aims to address a number of challenges and inequities in the geometry processing discipline. Not all universities host faculty whose work touches on this emerging discipline, reducing the cohort of students exposed to this discipline during their undergraduate careers. Moreover, as with many engineering and mathematical fields, geometry processing suffers from serious gender, racial, and socioeconomic imbalance.

So, we set to work to launch SGI by summer 2021. We obtained funding from a number of generous sponsors across industry and academia, listed here. Last January, we posted an application, and by the February 15 deadline we received 627 applications! A careful review process led us to narrow down to a cohort of 35 (paid) SGI Fellows, a brilliant, diverse, and enthusiastic group of early-stage researchers; we also invited a second cohort of students to participate in our initial week of geometry processing tutorials. We were blown away by the enthusiasm and breadth of backgrounds/stories we encountered among our applicants, and our group of Fellows includes participants across many time zones and educational institutions.

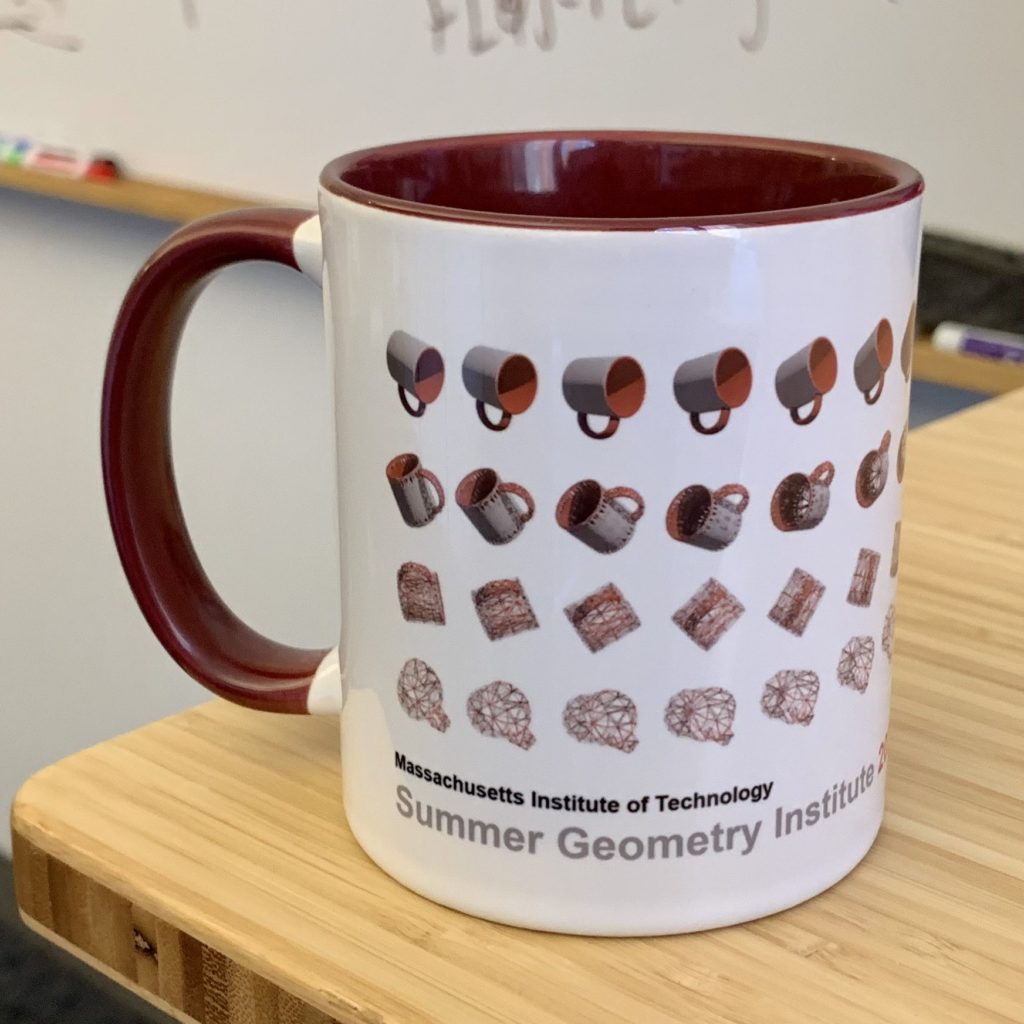

Now that SGI is approaching in just a few weeks, we’re digging into the details of organizing this large, decentralized program. We’ve set up a shared Slack environment, confirmed 15 guest speakers, and tested online videochat tools. A team of SGI Fellows led by our student Lucas designed a custom-printed coffee mug to be distributed to the SGI Fellows, mentors, and volunteers. Just last week, my graduate students, postdocs, and I packed 72 packages to be mailed around the world with these mugs, as well as other swag provided by our program sponsors for the Fellows.

SGI kicks off in roughly two weeks, and it will happen in two phases:

- In the first week, our Fellows (plus additional invited participants) will participate in tutorials led by a team of geometry processing experts, designed to introduce them to the big ideas and scientific techniques encountered in geometry processing research. Each day is led by a different researcher: Oded Stein (MIT), Silvia Sellán (U of Toronto), Hsueh-Ti (Derek) Liu (U of Toronto), Michal Edelstein (Technion), and Amir Vaxman (Utrecht University).

- In the remaining five weeks, the students participate in short-term research projects. Each project lasts 1-2 weeks and is led by a geometry processing expert. We have over 30 project mentors, who have proposed projects across a variety of applications, from machine learning on triangle meshes to discrete differential geometry to Bayesian inference. Each project is worked on intensively by 4-8 students, who interact day-to-day through digital environments, shared repositories, and so on. Our program will be interspersed with guest speakers from industry and research, as well as panel discussions on graduate school admissions, research techniques, and other topics.

On this blog, the SGI students and team members will share their progress. Our goal is to share technical insights gained by our students as well as the progress of the program itself. We invite interested parties to subscribe, so they can receive day-to-day updates as we train the next generation of geometry processing experts!