In this blog post, we explain how to train the SIREN architecture on a 3D point cloud (the Dragon object from The Stanford 3D Scanning Repository). This work was done during the project “Implicit Neural Representation (INR) based on the Geometric Information of Shapes” at SGI 2022, with Alisia Lupidi and Krishnendu Kar, under the guidance of Dr. Dena Bazazian and Shaimaa Monem Abdelhafez.

Introduction

SIREN is a neural network that uses a periodic (sine) activation function. Among other signals, it can represent a 3D shape implicitly as a signed distance function (SDF): the network maps each 3D point to its signed distance from the surface, and the surface itself is the zero level set of that function. We train this network in a Colab notebook to reconstruct the Dragon object from The Stanford 3D Scanning Repository, and we provide instructions to reproduce our experiments.
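To make the periodic activation concrete, here is a minimal, untrained SIREN forward pass in plain Python that maps a 3D point to a single scalar, the predicted signed distance. It is a sketch, not the repository's PyTorch implementation. It follows the initialization scheme described in the SIREN paper (first layer drawn from U(-1/n, 1/n), later layers from U(-sqrt(6/n)/ω0, sqrt(6/n)/ω0), with ω0 = 30). The layer sizes, the bias initialization, and the helper names (`SirenLayer`, `make_siren`, `sdf`) are our own assumptions.

```python
import math
import random


class SirenLayer:
    """One fully connected layer; hidden layers apply sin(omega_0 * (W x + b))."""

    def __init__(self, n_in, n_out, rng, omega_0=30.0, is_first=False, is_last=False):
        # SIREN-paper initialization: narrower range for the first layer,
        # sqrt(6 / n) / omega_0 for every other layer.
        bound = 1.0 / n_in if is_first else math.sqrt(6.0 / n_in) / omega_0
        self.w = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
        # Assumption: PyTorch-style default bias range U(-1/sqrt(n), 1/sqrt(n)).
        b = 1.0 / math.sqrt(n_in)
        self.b = [rng.uniform(-b, b) for _ in range(n_out)]
        self.omega_0 = omega_0
        self.is_last = is_last

    def __call__(self, x):
        z = [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(self.w, self.b)]
        if self.is_last:
            return z  # the final layer is linear: its output is the SDF value
        return [math.sin(self.omega_0 * zi) for zi in z]


def make_siren(hidden=64, depth=3, seed=0):
    """Build a small SIREN: 3 inputs (x, y, z) -> `depth` sine layers -> 1 output."""
    rng = random.Random(seed)
    layers = [SirenLayer(3, hidden, rng, is_first=True)]
    layers += [SirenLayer(hidden, hidden, rng) for _ in range(depth - 1)]
    layers.append(SirenLayer(hidden, 1, rng, is_last=True))
    return layers


def sdf(layers, point):
    """Evaluate the network at one 3D point and return the scalar prediction."""
    x = list(point)
    for layer in layers:
        x = layer(x)
    return x[0]
```

For example, `sdf(make_siren(), (0.1, -0.2, 0.3))` returns an arbitrary number for this untrained network; after training, points where the output is close to zero lie on the reconstructed surface.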

Note: you need a GPU for this experiment. In Google Colab, select a GPU under Runtime > Change runtime type.
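Before starting a long training run, it can help to confirm that the runtime actually sees a GPU. Below is a small sketch, assuming PyTorch is installed (as it is on Colab), with a rough fallback when it is not; the helper name `gpu_available` is ours.

```python
import importlib.util
import shutil


def gpu_available() -> bool:
    """Best-effort check for a CUDA-capable GPU."""
    if importlib.util.find_spec("torch") is not None:
        import torch  # imported lazily so the check also runs without PyTorch
        return torch.cuda.is_available()
    # Fallback heuristic: the NVIDIA driver utility is on the PATH.
    return shutil.which("nvidia-smi") is not None


if __name__ == "__main__":
    print("GPU available:", gpu_available())
```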

Instructions to run our experiments

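First, clone the SIREN repository in your notebook and change into the repository directory, since the scripts below are run from its root:

```shell
git clone https://github.com/vsitzmann/siren
cd siren
```

In a Colab cell, prefix each shell command with `!` (for example, `!git clone ...`) and use `%cd siren` instead of `cd siren`, because `!cd` runs in a temporary subshell and does not change the notebook's working directory.

After cloning the repository, install the required libraries: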

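```shell
pip install sk-video
pip install cmapy
pip install ConfigArgParse
pip install plyfile
```

Then, download the Dragon object from The Stanford 3D Scanning Repository (you can also try another 3D object). The 3D object has to be converted to the xyz format, for which you can use MeshLab. The SIREN data loader reads each line as a point followed by its normal (x y z nx ny nz), so compute normals in MeshLab if the model has none and keep them when exporting.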
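Before converting the Dragon, you can sanity-check the pipeline with a synthetic shape. The sketch below uses our own hypothetical helpers, not part of the SIREN repository: it samples points on a sphere (where the outward unit normal at a point is the point divided by the radius), writes them in the six-column `x y z nx ny nz` layout, and checks that every line of a file has six columns. The same checker can be run on the xyz file exported from MeshLab.

```python
import math
import random
from typing import List, Tuple

Row = Tuple[float, float, float, float, float, float]


def sphere_point_cloud(n: int, radius: float = 1.0, seed: int = 0) -> List[Row]:
    """Sample n points on a sphere centered at the origin, with unit normals."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        # Normalizing a Gaussian vector gives a uniformly distributed direction.
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in v)) or 1.0
        nx, ny, nz = (c / norm for c in v)
        rows.append((radius * nx, radius * ny, radius * nz, nx, ny, nz))
    return rows


def write_xyz(path: str, rows: List[Row]) -> None:
    """Write one whitespace-separated 'x y z nx ny nz' line per point."""
    with open(path, "w") as f:
        for row in rows:
            f.write(" ".join(f"{c:.6f}" for c in row) + "\n")


def check_xyz(path: str) -> int:
    """Return the number of points; raise ValueError if a line lacks 6 columns."""
    count = 0
    with open(path) as f:
        for lineno, line in enumerate(f, start=1):
            if not line.strip():
                continue  # ignore blank lines
            if len(line.split()) != 6:
                raise ValueError(f"line {lineno}: expected 6 columns (x y z nx ny nz)")
            count += 1
    return count
```

For instance, `write_xyz("sphere.xyz", sphere_point_cloud(10000))` produces a small file that can be passed to the training script in place of the Dragon.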

The next step is to train SIREN to reconstruct the 3D object by running the training script:

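```shell
python experiment_scripts/train_sdf.py --model_type=sine --point_cloud_path=<path_to_the_Dragon_in_xyz_format> --batch_size=25000 --experiment_name=experiment_1
```

The script regularly saves checkpoints in a subdirectory named “experiment_1” of its logging directory. If you run out of GPU memory, reduce `--batch_size`.

Finally, we can test the trained model and use it to reconstruct our Dragon by running: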

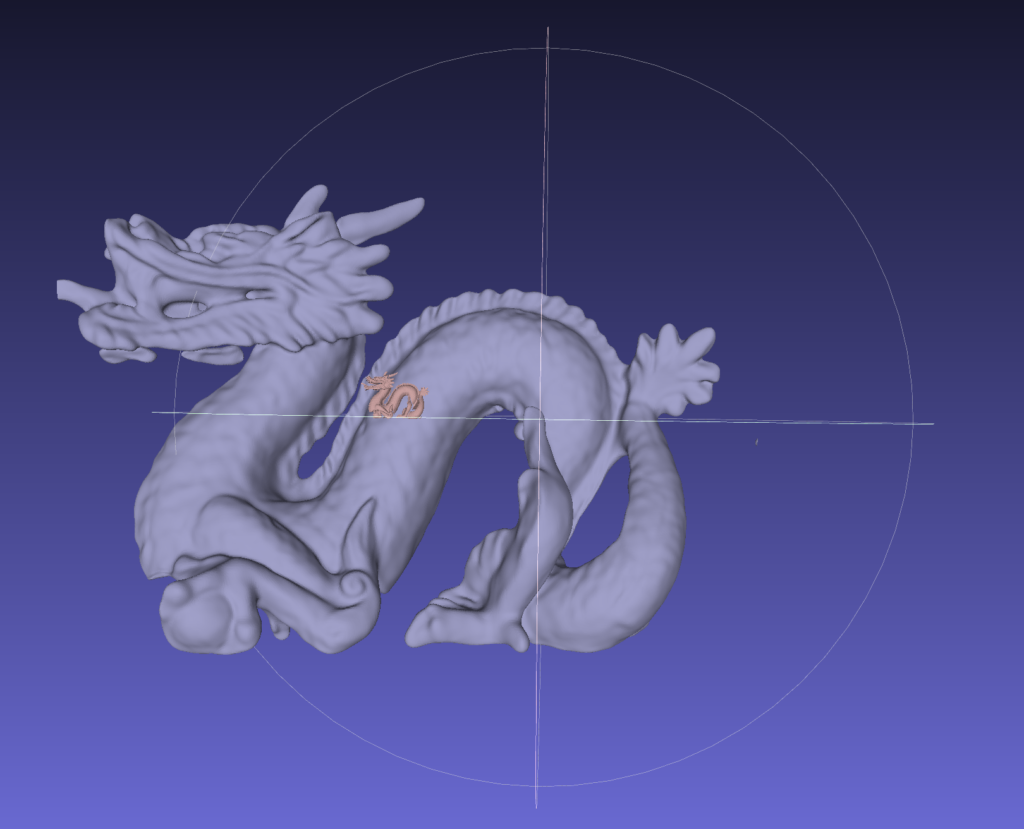

python experiments_scripts/test_sdf.py --checkpoint_path=<path_to_the_checkpoint_of_the_trained_model> --experiment_name=experiment_1_rec --resolution=512The reconstructed point cloud file will be saved in the folder “experiment_1_rec”. Here is also visualization for the reconstructed Dragon (in gray) wrt to the original one (in brown) using MeshLab. Where you can notice the reconstructed version has a larger scale.
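To compare the reconstruction with the original Dragon in the same coordinates, the normalization can be undone. Below is a minimal pure-Python sketch of what we understand the loader to do (subtract the centroid, then map the global minimum and maximum of the centered coordinates to -1 and 1 with one shared factor, which keeps the aspect ratio) and of its inverse. The function names are hypothetical helpers of ours, and you should check the loader in the repository before relying on this mapping.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float, float]


def fit_normalization(points: Sequence[Point]) -> Tuple[Point, float, float]:
    """Return (mean, lo, hi): the centroid and the global min/max of the centered coordinates."""
    n = len(points)
    mean = tuple(sum(p[i] for p in points) / n for i in range(3))
    centered = [c - mean[i] for p in points for i, c in enumerate(p)]
    return mean, min(centered), max(centered)


def normalize(p: Point, mean: Point, lo: float, hi: float) -> Point:
    """Center, scale to [0, 1] with a single factor, then shift and stretch to [-1, 1]."""
    return tuple(((c - mean[i] - lo) / (hi - lo) - 0.5) * 2.0 for i, c in enumerate(p))


def denormalize(q: Point, mean: Point, lo: float, hi: float) -> Point:
    """Invert normalize(): map a point from [-1, 1] back to the original coordinates."""
    return tuple((c / 2.0 + 0.5) * (hi - lo) + lo + mean[i] for i, c in enumerate(q))
```

In practice, `mean`, `lo`, and `hi` would be computed from the original xyz coordinates, and `denormalize` applied to each vertex of the reconstructed mesh before loading both into MeshLab.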