written by Nursena Koprucu and Ruyu Yan

Introduction

In the first week of project SE(3) Invariant and Equivariant Neural Network for Geometry Processing, we studied the research approach frame averaging that tackles the challenge of representing intrinsic shape properties with neural networks. Here we introduce the paper Frame Averaging for Invariant and Equivariant Network Design[1] which grounded the theoretical analysis of generalizing frame averaging with different data types and symmetry groups.

Motivation

Deep neural networks are useful for approximating unknown functions with combinations of linear and non-linear units. Its application, especially in geometry processing, involves learning functions invariant or equivariant to certain symmetries of the input data. Formally, for a function \(f\) to be

- invariant to transformation \(A\), we have \(f(Ax)=f(x)\);

- equivariant to transformation \(A\), we have \(f(Ax)=Af(x)\).

This paper introduced Frame Averaging (FA), a systematic framework for incorporating invariance or equivariance into existing architecture. It was derived from the observation in group theory that

Arbitrary functions \(\phi:V\rightarrow\mathbb{R}, \Phi:V\rightarrow W\), where \(V, W\) are some vector spaces, can be made invariant or equivariant by symmetrization, that is averaging over the group.

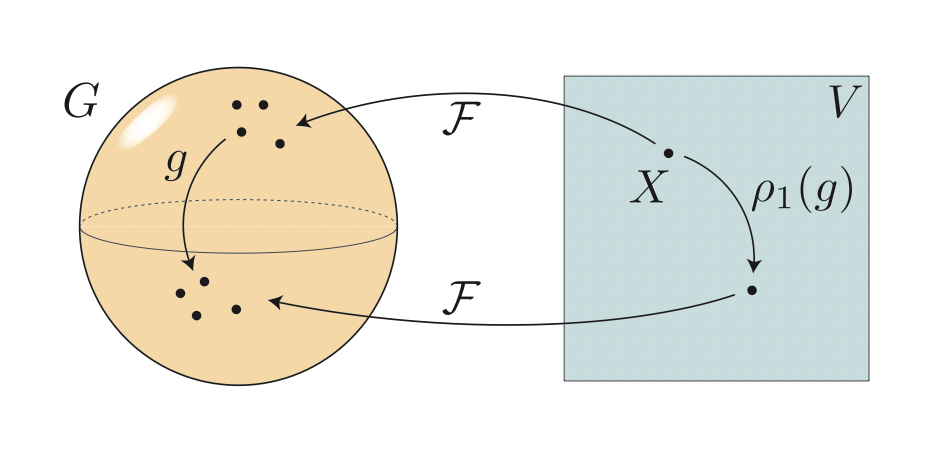

Instead of averaging over the entire group \(G\) with potentially large cardinality, where exact averaging is intractable, the paper proposed an alternative of averaging over a carefully selected subset \(\mathcal{F}(X)\subset G\) such that the cardinality \(|\mathcal{F}(X)|\) is small. Computing the average over \(\mathcal{F}(X)\) can efficiently retain both the expressive power and the exact invariance/equivariance.

Frame Averaging

Let \(\phi\) and \(\Phi\) be arbitrary functions, e.g. neural networks.

Let \({G}\) be a group with representation \({p_i}\)’s that preserves the group structure by satisfying \({p_i}(gh)= {p_i}(g){p_i}(h)\) for all \({g}, {h}\) in \({G}\).

We want to make

- \(\phi\) into an invariant function, i.e. \(\phi(p_1(g){X})= \phi({X})\); and

- \(\Phi\) into an equivariant function, i.e. \(\Phi(p_1(g){X})= {p_2(g)}\Phi({X})\).

Instead of averaging over the entire group each time, we will do that by averaging over group elements. Specifically, we will take the average on a subset of the group elements – called a frame.

A frame is defined as a set valued function \(\mathcal{F}:V\rightarrow\ {2^G \setminus\emptyset}\).

A frame is \(G\)-equivariant if \(F(\rho_1(g)X) = gF(X)\) for every \(X \in V\) and \(g \in G\) where\(gF(X)= \{gh|h \in F(X)\}\) and the equality means that the two sets are equal.

Why are the equivariant frames useful?

If we have a case where the equivariant frame is easy to compute, and its cardinality is not too large, then averaging over the frame, provides the required function symmetrization. (for invariance and equivariance).

Frame Averaging

Let \(\mathcal{F}\) be a \({G}\) equivariant frame, and \(\phi:V\rightarrow\mathbb{R}, \Phi:V\rightarrow W\) be some functions.

Then, \(\langle \phi \rangle _F\) is \(G\) invariant, while \(\langle \Phi \rangle _F\) is \(G\) equivariant.

Incorporating G as a second symmetry

Here, we have \(\phi, \Phi\) that is already invariant/equivariant w.r.t. some symmetry group \(H\). We want to make it invariant/equivariant to \(H × G\).

Frame Average second symmetry

Let \(\mathcal{F}\) be a \({G}\) equivariant frame, and \(\phi:V\rightarrow\mathbb{R}, \Phi:V\rightarrow W\) be some functions.

Then, \(\langle \phi \rangle _F\) is \(G\) invariant, while \(\langle \Phi \rangle _F\) is \(G\) equivariant.

Assume \(F\) is \(H\)-invariant and \(G\)-equivariant. Then,

- If \(\phi:V\rightarrow\mathbb{R}\) is \(H\) invariant, and \(ρ_1,τ_1\) commute, then \(\langle \phi \rangle _F\) is \(G×H\) invariant.

- If \(\Phi:V\rightarrow W\) is \(H\) equivariant and \(ρ_i, τ_i, i = {1,2}\), commute then \(\langle \Phi \rangle _F\) is \(G×H\) equivariant.

Efficient calculation of invariant frame averaging

We can come up with a more efficient form of FA in the invariant case.

The stabilizer of an element \(X∈V\) is a subgroup of \(G\) defined by \(G_X =\{g∈G∣ρ_1(g)X=X\}.\) \(G_X\) naturally induces an equivalence relation ∼ on \(F(X)\), with \(g ∼ h ⇐⇒ hg^{−1} ∈ G_X.\) The equivalence classes (orbits) are \([g] = {h ∈ F(X)∣g ∼ h} = G_Xg ⊂ F(X), for g∈F(X)\), and the quotient set is denoted \(F(X)/G_X.\)

Equivariant frame \(F(X)\) is a disjoint union of equal size orbits, \([g] ∈ F(X)/G_X\).

Approximation of invariant frame averaging

When \(F(X)/G_X\) is hard to enumerate, we can draw a random element from \(F(X)/G_X\) with uniform probability.

Let \(F(X)\) be an equivariant frame, and \(g ∈ F(X)\) be a uniform random sample. Then \([g] ∈ F(X)/G_X\) is also uniform.

Hence, we get an efficient approximation strategy that is averaging over uniform samples.

Model Instances

An instance of the FA framework contains:

- The symmetry group \(G\), representations \(\rho_1,\rho_2\) , and the underlying frame \(\mathcal{F}\).

- The backbone architecture for \(\phi\) (invariant) or \(\Phi\) (equivariant).

Example: Normal Estimation with Point Cloud

Here we use normal estimation with point cloud data as an example. The goal is to incorporate \(E(d)\) equivariance to the existing backbone architecture (e.g. PointNet and DGCNN).

Mathematical Formulation

The paper proposed the frame \(\mathcal{F}(X)\) based on Principal Component Analysis (PCA). Formally, we present the centroid of \(X\) by

$$t=\frac{1}{n}X^T\mathbf{1}\in\mathbb{R}^d $$

$$t=\frac{1}{n}X^T\mathbf{1}\in\mathbb{R}^d $$

and covariance matrix computed after centering the data is

$$C=(X-\mathbf{1}t^T)^T(X-\mathbf{1}t^T)\in\mathbb{R}^{d\times d} $$

Then, we define the frame by

$$\mathcal{F}(X)=\{([\alpha_1v_1, …, \alpha_dv_d], t)|\alpha_i\in\{-1, 1\}\}\subset E(d) $$

where \(\mathbf{v}_1, …, v_d\) are the eigenvectors of \(C\). From that, we can form the representation for the frames as transformation matrices.

$$\rho(g)=\begin{pmatrix}R & t \\\mathbf{0}^T & 1\end{pmatrix}, R=\begin{bmatrix}\alpha_1v_1 &…&\alpha_dv_d\end{bmatrix} $$

Implementation

We show part of the implementation of normal estimation for point cloud in PyTorch. For more details, please refer to the open-source code provided by the authors of [1].

We first perform PCA with the input point cloud.

# Compute the centroid

center = pnts.mean(1,True)

# Center the points

pnts_centered = pnts - center

# Compute covariance matrix

R = torch.bmm(pnts_centered.transpose(1,2),pnts_centered)

# Eigen-decomposition

lambdas,V_ = torch.symeig(R.detach().cpu(),True)

# Reshape the eigenvectors

F = V_.to(R).unsqueeze(1).repeat(1,pnts.shape[1],1,1)

Then, we can construct the rotation matrices by

# Write down permutations of alpha

ops = torch.tensor([

[1,1,1],

[1,1,-1],

[1,-1,1],

[1,-1,-1],

[-1,1,1],

[-1,1,-1],

[-1,-1,1],

[-1,-1,-1]

]).unsqueeze(1).to(point_cloud)

F_ops = ops.unsqueeze(0).unsqueeze(2) * Frame.unsqueeze(1)

Finally, we perform the preprocessing to the point cloud data by centering and computing the frame average.

pnts_centered = point_cloud - center

# Apply inverse of the frame operation

framed_input = torch.einsum('bopij,bpj->bopi',F_ops.transpose(3,4),pnts_centered)

# feed forward to the backbone neural network

# e.g. point net

outs = self.nn_forward(framed_input)

# Apply frame operation

outs = torch.einsum('bopij,bopj->bopi',F_ops,outs)

# Take the average

outs = outs.mean(1)

For a more detailed example application of frame averaging, please see the next blog post by our group: Frame Averaging for Invariant Point Cloud Classification.

[1] Omri Puny, Matan Atzmon, Heli Ben-Hamu, Ishan Misra, Aditya Grover, Edward J. Smith, and Yaron Lipman. Frame averaging for invariant and equivariant network design. In ICLR, 2022.