By: Zeltzyn Montes and Sneha Sambandam

Many of the datasets we would like to perform machine learning on have an intrinsic hierarchical structure. This includes social networks, taxonomies, NLP sentence structure, and anything that can be represented as a tree. While the most common machine learning tools work in the Euclidean space, this space is not optimal for representing hierarchical data.

When representing hierarchical data in 2D space, we have two main objectives: to preserve the hierarchical relationships between parent and child nodes, and to ensure that distances between nodes are roughly proportional to the number of links between them. However, when representing this structure in 2D Euclidean space, we run into a few limitations.

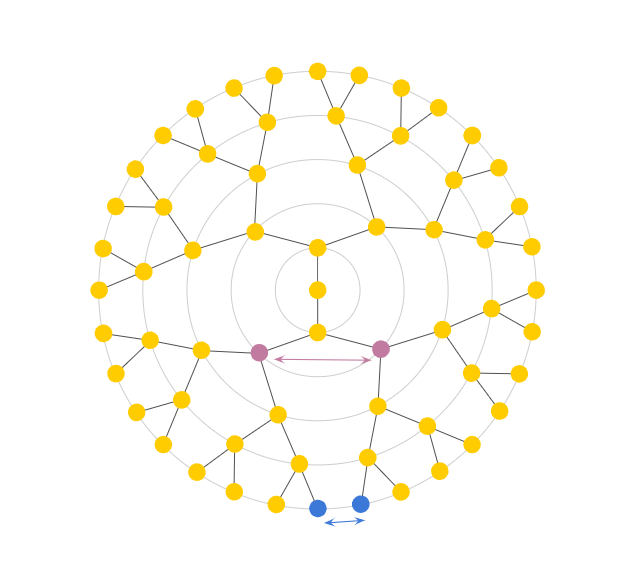

For example, let’s first draw a tree with a branching factor of 2 (Fig 1). When our tree is very deep, placing nodes equidistantly causes us to run out of space rather quickly, as in our example above at a depth of only 5. The second problem is that distances between nodes no longer reflect their relationships in the tree. To help visualize this phenomenon, refer to Fig 2a. Here, arc refers to the shortest path between two nodes along the tree.
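To see why space runs out, consider a hypothetical radial layout (our assumption for illustration, not necessarily how Fig 1 was drawn) that places the 2^d nodes at depth d evenly on a circle of radius d, so every edge has roughly unit length. The circumference 2πd grows only linearly, so the gap between neighbouring nodes shrinks quickly.

```python
import math

def neighbour_gap(depth: int, branching: int = 2) -> float:
    """Arc length between adjacent nodes at `depth` in a hypothetical radial layout.

    Nodes at depth d sit evenly on a circle of radius d, so the circle's
    circumference 2*pi*d is shared by branching**d nodes.
    """
    if depth == 0:
        return 0.0  # only the root lives here; it has no neighbours
    return 2 * math.pi * depth / branching ** depth

for d in range(1, 9):
    print(f"depth {d}: {2 ** d:4d} nodes, gap {neighbour_gap(d):.4f}")
```

In this layout the gap between siblings drops below the unit edge length at depth 5 and keeps halving (roughly) with every extra level.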

Euclidean vs desired measurement of distance between nodes.

(Courtesy of Alison Pouplin)

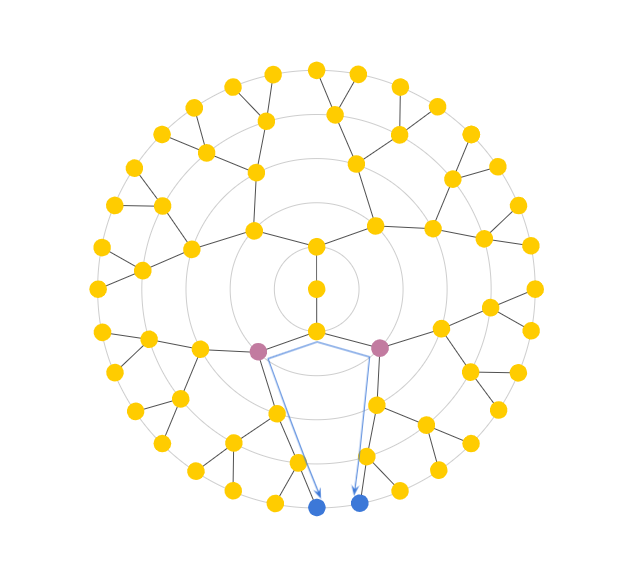

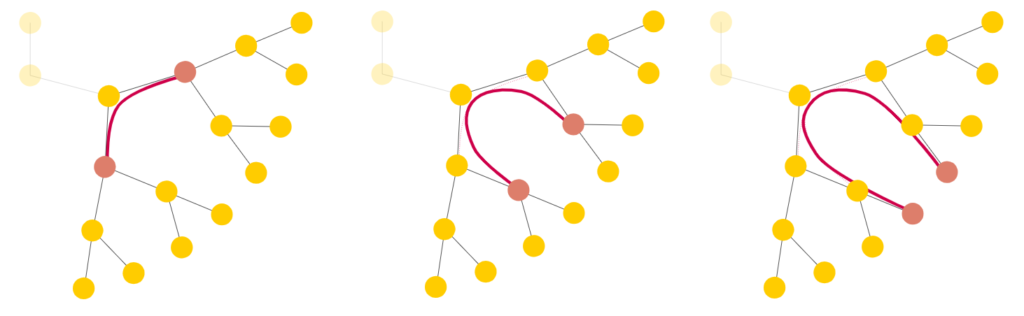

In theory, since the arc between the purple nodes is shorter than the arc between the blue nodes, the purple nodes should be closer together. However, the Euclidean distance between the purple nodes is larger than the distance between the blue nodes. In 2D Euclidean space, siblings in later generations end up closer and closer together, which renders Euclidean distance almost meaningless: the room available at radius r (a circle's circumference, 2πr) grows only linearly, while the number of nodes at depth d grows exponentially (2^d for a binary tree). Instead, we need a better distance measure: one that travels “up” the tree before going back down, as shown in Fig 2b, in a space that, like the tree itself, grows exponentially, as shown in Fig 3.
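The “up, then back down” distance is simply the number of edges on the path through the lowest common ancestor. A minimal sketch, assuming a hypothetical heap-style numbering of a binary tree (root = 1, the children of node i are 2i and 2i + 1):

```python
def depth(node: int) -> int:
    """Depth of a node in heap numbering (root = 1 has depth 0)."""
    return node.bit_length() - 1

def tree_distance(a: int, b: int) -> int:
    """Number of edges on the path a -> lowest common ancestor -> b."""
    steps = 0
    # Walk the deeper node up until both nodes are at the same depth.
    while depth(a) > depth(b):
        a //= 2
        steps += 1
    while depth(b) > depth(a):
        b //= 2
        steps += 1
    # Walk both nodes up together until they meet at their common ancestor.
    while a != b:
        a //= 2
        b //= 2
        steps += 2
    return steps

print(tree_distance(2, 3), tree_distance(16, 17), tree_distance(16, 31))
```

Siblings are always at tree distance 2, while the two outermost nodes at depth 4 (16 and 31) are at distance 8, even though a Euclidean drawing crowds all depth-4 nodes together.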

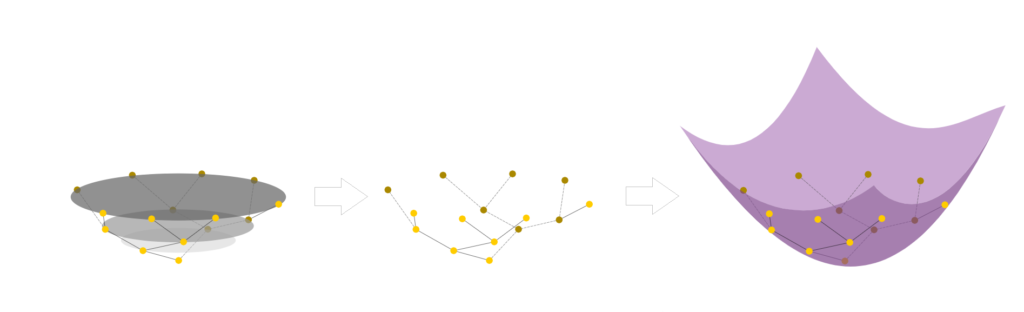

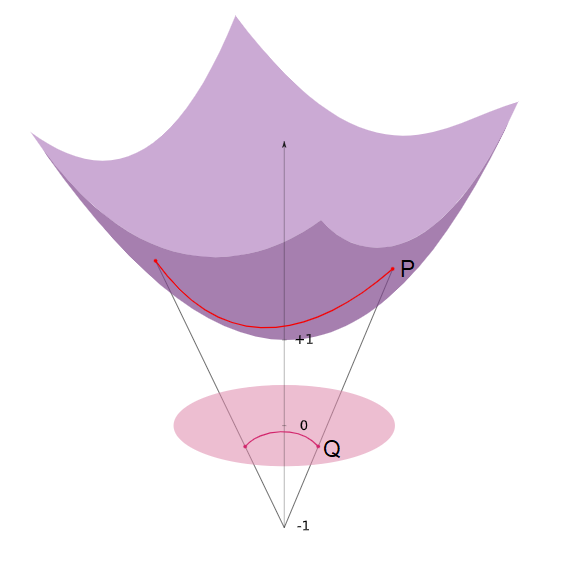

A solution for this is to add an extra dimension (Fig 4). Now, the tree follows a smooth manifold: a hyperboloid. A hyperboloid is formed by rotating a hyperbola around its axis of symmetry; when distances on it are measured in Minkowski space, it is a manifold of constant negative curvature. Note that Minkowski space is similar to Euclidean space, except that one dimension is treated differently: its term enters the inner product with a negative sign, while the other dimensions behave as they do in Euclidean space.
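A minimal sketch of the Minkowski inner product and of placing a point on the hyperboloid. We assume z is the special (negatively signed) axis, which matches the vertex (0,0,1) and focal point (0,0,−1) used below; the helper names are our own.

```python
import math

def minkowski_dot(u, v):
    """Minkowski bilinear form with z as the special axis: x*x' + y*y' - z*z'."""
    return u[0] * v[0] + u[1] * v[1] - u[2] * v[2]

def lift_to_hyperboloid(x, y):
    """Place a planar point (x, y) on the upper sheet z**2 - x**2 - y**2 = 1."""
    return (x, y, math.sqrt(1.0 + x * x + y * y))

p = lift_to_hyperboloid(3.0, 4.0)
print(p, minkowski_dot(p, p))
```

Every point on the hyperboloid has a Minkowski “squared norm” of −1 (up to rounding), even though its Euclidean norm can be arbitrarily large.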

Embedding the tree on a smooth manifold now allows us to compute geodesics (the shortest paths between two points on a surface). We can also map the data onto a model that fits in the plane, such as the Poincaré disk model. This model can be derived using a stereographic projection of the hyperboloid model onto the unit disk in the z = 0 plane (Fig 5).
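On the hyperboloid (curvature −1), the geodesic distance has a compact closed form, d(u, v) = arccosh(−⟨u, v⟩), where ⟨·,·⟩ is the Minkowski inner product. A sketch, again assuming z is the special axis and using hypothetical helper names:

```python
import math

def minkowski_dot(u, v):
    # x*x' + y*y' - z*z': the z term carries the minus sign
    return u[0] * v[0] + u[1] * v[1] - u[2] * v[2]

def lift(x, y):
    # point on the upper sheet z**2 - x**2 - y**2 = 1
    return (x, y, math.sqrt(1.0 + x * x + y * y))

def hyperboloid_distance(u, v):
    """Geodesic distance between two points on the hyperboloid (curvature -1)."""
    # -<u, v> >= 1 on the hyperboloid; clamp to guard against rounding just below 1
    return math.acosh(max(1.0, -minkowski_dot(u, v)))

vertex = lift(0.0, 0.0)          # the "bottom" of the hyperboloid, (0, 0, 1)
far = lift(math.sinh(2.0), 0.0)  # equals (sinh 2, 0, cosh 2), geodesic distance 2 from the vertex
print(hyperboloid_distance(vertex, far))
```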

The basic idea of this stereographic projection is:

- Start with a point P on the hyperboloid we wish to map.

- Draw the line from P to the focal point N = (0,0,−1).

- The point where that line crosses the z = 0 plane is our point Q in the Poincaré model.
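The steps above reduce to a one-line formula: the line N + t(P − N) reaches z = 0 at t = 1/(1 + z), so Q = (x/(1 + z), y/(1 + z)). A minimal sketch, assuming the hyperboloid z² − x² − y² = 1 with z > 0 (the function names are our own), together with the inverse map back onto the hyperboloid:

```python
import math

def to_poincare(p):
    """Project (x, y, z) on the hyperboloid through N = (0, 0, -1) onto the z = 0 plane."""
    x, y, z = p
    # The line N + t*(P - N) reaches z = 0 at t = 1 / (1 + z).
    return (x / (1.0 + z), y / (1.0 + z))

def from_poincare(q):
    """Inverse map: lift a point of the open unit disk back onto the hyperboloid."""
    a, b = q
    r2 = a * a + b * b
    return (2 * a / (1 - r2), 2 * b / (1 - r2), (1 + r2) / (1 - r2))

q = to_poincare((3.0, 4.0, math.sqrt(26.0)))
print(q, math.hypot(*q))
```

Because z ≥ 1 on the hyperboloid, the projected point always lands strictly inside the unit disk, and the vertex (0,0,1) maps to the center of the disk.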

The Poincaré disk model is governed by the Möbius gyrovector space in the same way that Euclidean geometry is governed by an ordinary vector space (i.e., it comes with its own rules, properties and metrics). While we won’t go into the specifics of what constitutes a gyrovector space, we shall detail the properties of the Poincaré disk that make it advantageous for representing hierarchical data.
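Without going into gyrovector theory, the central operation, Möbius addition, is easy to state. Here is a minimal sketch of the standard formula for curvature −1 in the 2D disk; the helper name is our own:

```python
def mobius_add(x, y):
    """Mobius addition of two points of the open unit disk (curvature -1)."""
    xy = x[0] * y[0] + x[1] * y[1]  # Euclidean dot product <x, y>
    xx = x[0] ** 2 + x[1] ** 2      # |x|^2
    yy = y[0] ** 2 + y[1] ** 2      # |y|^2
    denom = 1 + 2 * xy + xx * yy
    cx = (1 + 2 * xy + yy) / denom  # coefficient on x
    cy = (1 - xx) / denom           # coefficient on y
    return (cx * x[0] + cy * y[0], cx * x[1] + cy * y[1])

print(mobius_add((0.5, 0.0), (0.5, 0.0)))
print(mobius_add((0.5, 0.0), (0.0, 0.5)), mobius_add((0.0, 0.5), (0.5, 0.0)))
```

Adding 0.5 to 0.5 along one axis gives 0.8 rather than 1.0, so sums never leave the disk, and unlike ordinary vector addition, swapping the operands can change the result.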

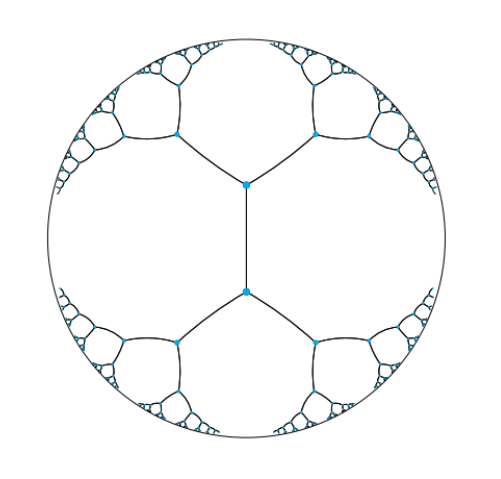

In Fig 6, we can see a hierarchical tree with a branching factor of two embedded in a Poincaré disk. Although the links appear shorter and shorter toward the boundary, they all have the same hyperbolic length, because distances grow exponentially as you move toward the edge of the disk.
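We don’t have the coordinates behind Fig 6, but the effect can be checked on two made-up segments using the standard closed-form distance of the Poincaré disk (curvature −1), d(u, v) = arccosh(1 + 2|u − v|² / ((1 − |u|²)(1 − |v|²))). The function name is our own:

```python
import math

def poincare_distance(u, v):
    """Hyperbolic distance between two points of the open unit disk (curvature -1)."""
    diff2 = (u[0] - v[0]) ** 2 + (u[1] - v[1]) ** 2
    nu = 1 - (u[0] ** 2 + u[1] ** 2)
    nv = 1 - (v[0] ** 2 + v[1] ** 2)
    return math.acosh(1 + 2 * diff2 / (nu * nv))

# Two segments with the same Euclidean length 0.1: one near the center, one near the edge.
near_center = poincare_distance((0.0, 0.0), (0.1, 0.0))
near_edge = poincare_distance((0.89, 0.0), (0.99, 0.0))
print(near_center, near_edge)
```

The segment near the edge is more than ten times longer in hyperbolic terms, which is why edges drawn near the boundary can look tiny while having the same hyperbolic length as those near the center.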

Some notable points of this model are the following:

- The arcs never reach the circumference of the circle. This is analogous to the geodesic on the hyperboloid extending out to infinity, that is, as the arc approaches the circumference it’s approaching the “infinity” of the plane.

- This means that, compared to their Euclidean distances, hyperbolic distances grow exponentially as you move toward the edge of the circle: a segment of fixed Euclidean length is far longer, hyperbolically, near the boundary than near the center.

- Each arc meets the circumference at a 90 degree angle; this follows from the geometry of the hyperboloid and the projection. Straight lines (diameters of the disk) are the special case: they are the projections of geodesics that pass through the “bottom” of the hyperboloid at (0,0,1), which maps to the center of the disk.
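The growth described in these points can be made concrete with the distance from the center of the disk, which for curvature −1 is d = 2·artanh(r) for a point at Euclidean radius r. A minimal sketch (the function names are our own):

```python
import math

def distance_from_center(r: float) -> float:
    """Hyperbolic distance from the disk's center to a point at Euclidean radius r < 1."""
    return 2 * math.atanh(r)

def radius_at_distance(d: float) -> float:
    """Inverse map: Euclidean radius of the point at hyperbolic distance d from the center."""
    return math.tanh(d / 2)

for r in (0.5, 0.9, 0.99, 0.999):
    print(f"r = {r}: hyperbolic distance {distance_from_center(r):.3f}")

# Remaining Euclidean gap to the circumference after d units of hyperbolic distance.
gaps = [1 - radius_at_distance(d) for d in range(1, 6)]
print(gaps)
```

The distance diverges as r approaches 1, so the circumference really is at “infinity”. Conversely, each extra unit of hyperbolic distance shrinks the remaining Euclidean gap 1 − r by a factor approaching e.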

With these properties we are able to represent data in a compact, visually pleasing and apt form using hyperbolic geometry. Current research explores how we can harness these properties in order to perform effective machine learning on hierarchical data.