For my first SGI blog post, I decided to combine what we learned on the second day of the tutorial week, taught by the amazing Silvia Sellán, to create an alternative version of the playing cards that we fellows received in the swag package. The code can be found on SGI’s GitHub.

The cards are nicely designed already, so the goal is not to get a superior design but to put the knowledge we have collected into practice.

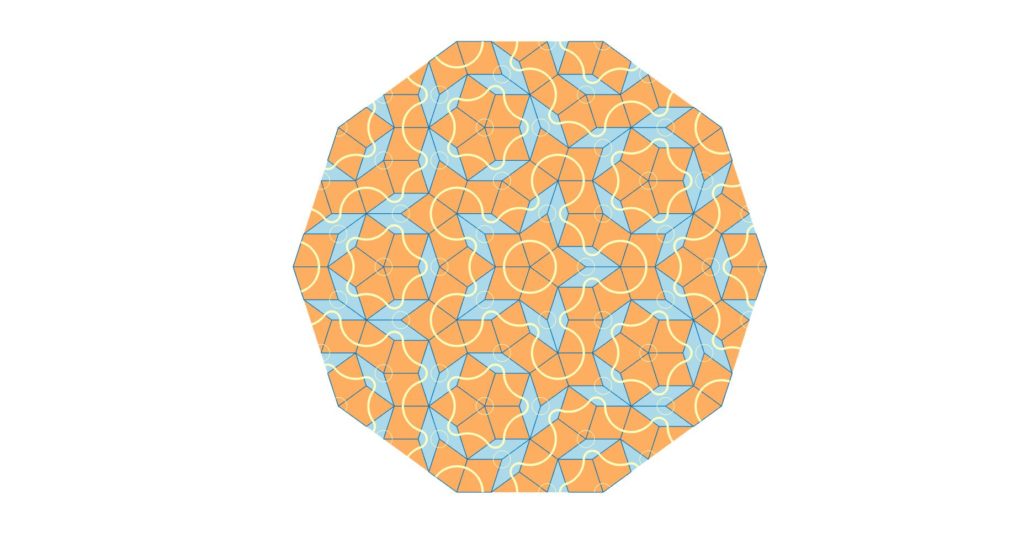

If you are curious about how MathWorks made the design, you can consult this website, where they explain the code that went into building the patterns. To briefly summarize the concept, the patterns were made using a Penrose tiling: an aperiodic tiling that covers a surface with shapes such as triangles and other polygons. The character of a Penrose tiling is more apparent on larger surfaces, where the repeated tiles form a recognizable pattern.

The tiling in the deck of playing cards is much simpler than what the Penrose Tiling usually ends up looking like because the number of tiles used to divide the shapes is very small. The aim is to recreate a simple pattern using triangulation principles that we have covered during the tutorial week.

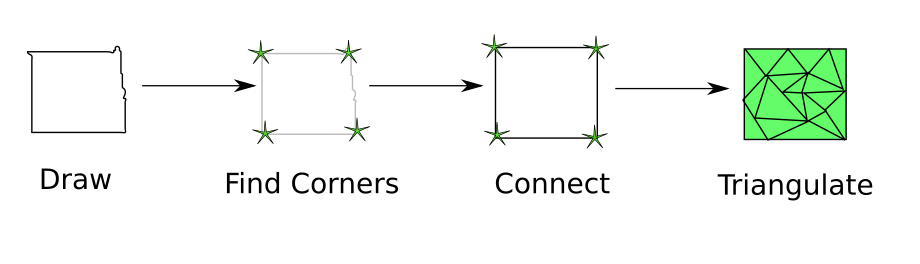

The steps of our approach, which we detail in this post, can be summarized in the pipeline below:

The idea used to recreate the cards is based on two commands: the drawing tool from gptoolbox (1) and the triangulation tool (2):

[V,E,cid] = get_pencil_curves(1e-6); (1)

[U,G] = triangle(V,E,[],'Flags','-q30'); (2)

To recreate the style of the playing cards, we need to convert the drawing obtained in (1) into a polyline-based design. A stroke drawn with get_pencil_curves is never perfectly straight, so we have to detect the segments it contains and estimate their positions.

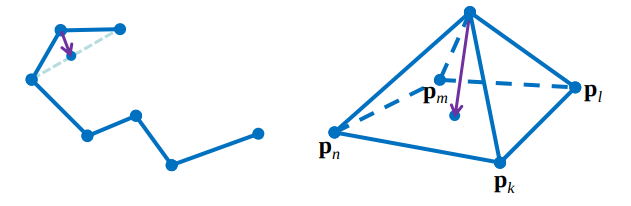

To achieve this polyline-based design, we estimate the endpoints of the segments and how they are connected. In other words, to recreate the polylines, we need to find the points that have corner properties and build the connections between them.

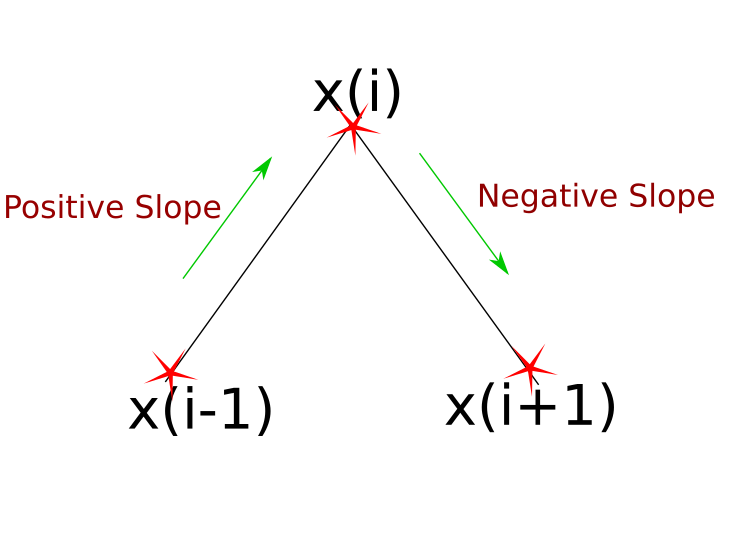

To detect these corner points, we can use the script find_corners.m. The principle is simple: a corner point is a point \(x_{i}\) where the sign of the derivative changes between the segment from its predecessor \(x_{i-1}\) and the segment to its successor \(x_{i+1}\). Two edge cases are also accounted for: vertical lines in the drawing, which yield +/-Inf derivatives, and horizontal lines, which have a zero derivative along the segment.
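The sign-change test can be sketched in a few lines of MATLAB. This is a hypothetical illustration, not the contents of find_corners.m: the variable names and the toy stroke `P` are made up, and the vertical and horizontal edge cases are not given any special treatment here.

```matlab
% Hypothetical sketch of the sign-change test (not the code of find_corners.m).
% P is an n-by-2 list of points ordered along the stroke; no repeated points.
P = [0 2; 1 1; 2 0; 3 1; 4 2];   % a "V" shape with its tip at (2,0)

d = diff(P, 1, 1);               % segment vectors between consecutive points
slope = d(:,2) ./ d(:,1);        % dy/dx; a vertical segment gives +/-Inf
s = sign(slope);                 % -1, 0 (horizontal) or +1 for each segment

% Interior point i sits between segment i-1 and segment i.
% It is a corner candidate when the slope sign differs on its two sides.
is_candidate = [false; s(1:end-1) ~= s(2:end); false];
corner_idx = find(is_candidate);

disp(P(corner_idx, :));          % candidate corner coordinates
```

On this toy input the only candidate is the tip of the "V". On a real hand-drawn stroke, many more candidates would appear and need further filtering.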

Points that satisfy this change in the slope’s sign are considered corner candidates. At the end of the corner detection process, a pruning step discards candidates that are very close to each other. Once we have the corner points, each polyline segment is simply the connection between two consecutive corners.
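As a rough illustration of the pruning and connection steps, the sketch below drops any candidate that lies too close to the last one kept and then links consecutive corners into edges. The candidate coordinates, the radius `min_dist` and the assumption that the shape is a closed loop are all made up for the example, and the actual script may prune differently.

```matlab
% Hypothetical corner candidates, one per row, ordered along the stroke.
C = [0 0; 0.02 0.01; 1 0; 1 1; 0.99 1.02; 0 1];
min_dist = 0.1;                  % assumed pruning radius

keep = true(size(C,1), 1);
for i = 2:size(C,1)
    prev = find(keep(1:i-1), 1, 'last');     % last candidate kept so far
    if norm(C(i,:) - C(prev,:)) < min_dist
        keep(i) = false;                     % too close to it: discard
    end
end
V = C(keep, :);                  % pruned corner points

% Connect consecutive corners; the last edge closes the loop (assumed closed shape).
n = size(V, 1);
E = [(1:n)', [2:n, 1]'];
```

Here `V` ends up holding the four corners of a unit square and `E` the four edges joining them, which is the vertex and edge format that the triangulation call in (2) takes as input.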

Curved strokes also naturally trigger this change in the slope’s sign. We therefore need to distinguish intentional curvature from the unintentional wobble that is inherent to lines drawn by hand with the get_pencil_curves tool. We do this by fixing a derivative threshold that discards very small variations.
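One way such a threshold could work is sketched below: a sign change only counts when the slope also jumps by more than a tolerance `tau`. Both the value of `tau` and the exact criterion are assumptions made for illustration, and vertical segments (infinite slopes) are not handled in this sketch.

```matlab
% Hypothetical sketch: a wobbly, nearly horizontal stroke followed by a real turn.
P = [0 0; 1 0.01; 2 0; 3 0.02; 4 -0.98; 5 -1.98];
tau = 0.5;                       % assumed threshold on the change in slope

d = diff(P, 1, 1);
slope = d(:,2) ./ d(:,1);        % approximately 0.01, -0.01, 0.02, -1, -1

sign_flip = sign(slope(1:end-1)) ~= sign(slope(2:end));    % flips at points 2, 3, 4
big_jump  = abs(slope(2:end) - slope(1:end-1)) > tau;      % large only at point 4

corner_idx = find(sign_flip & big_jump) + 1;   % +1 converts to a point index
```

The two small wobbles flip the slope sign but change the slope by only a few hundredths, so they are ignored. Only the real turn at the fourth point is kept.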

When the polyline-based design is ready, we can run the triangulation tool as in (2) to obtain our resulting shapes.

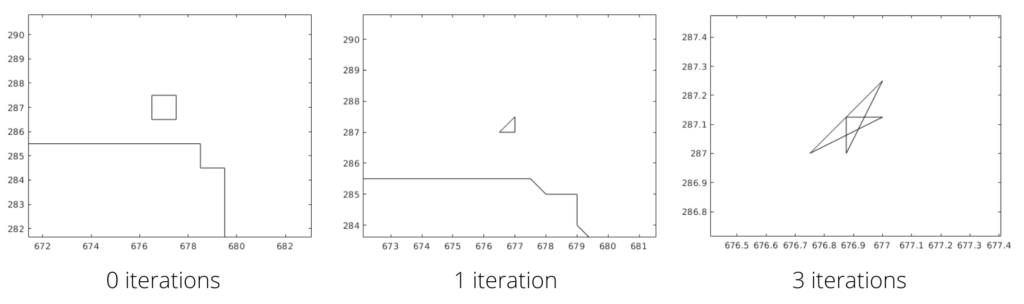

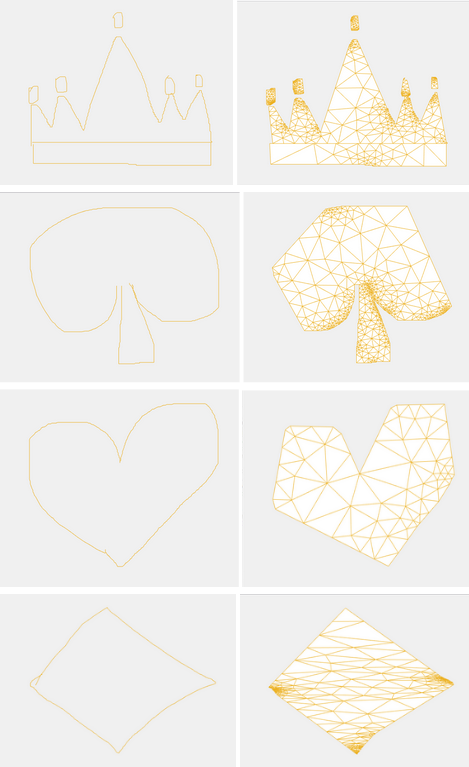

The pictures collect the results of our algorithm, which generated triangulated polyline versions of shapes drawn by users. The results are not perfect because the original drawings contain many irregularities. These irregularities stem from the low quality of drawings made with a mouse instead of an actual pen.

Some interesting observations can be made from the heart and spade results: the algorithm converts each of their curved lines into two straight lines that meet at a point with corner properties. This confirms that strong curvature is kept as a corner while the inevitable small wobbles are discarded.

And with that, we can finally say that we have our own “artsy” playing card design. Or maybe this is already MathWorks’ design in a parallel universe!